💡 Key Takeaways

- Core Logic: AI noise reduction utilizes "Source Separation" via deep learning, moving beyond traditional ENC’s simple frequency subtraction.

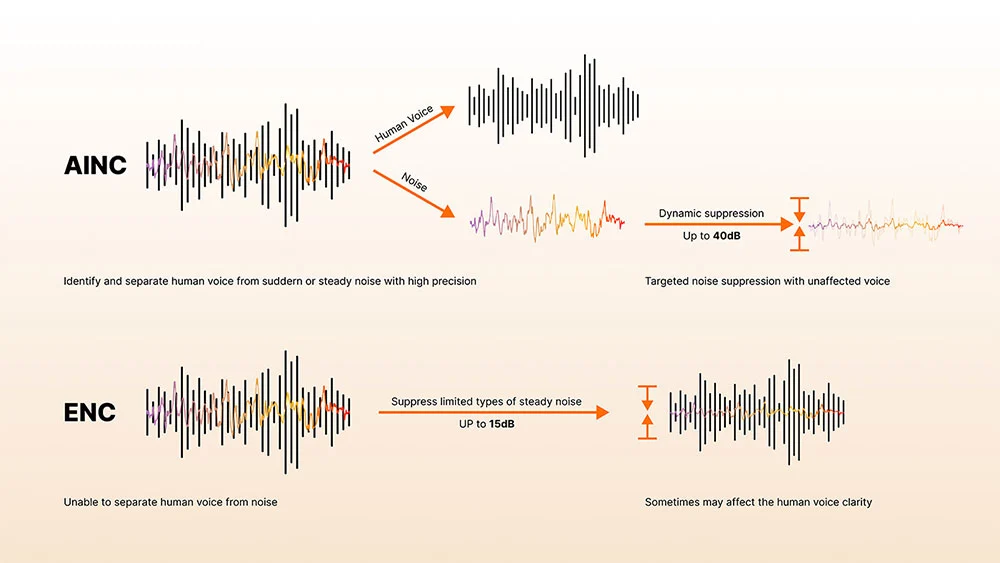

- Performance Leap: Compared to traditional 15dB steady-state reduction, AI algorithms achieve up to 40dB dynamic suppression, significantly enhancing Video Content Creation quality.

- Core Value: By recognizing unique "voice fingerprints," AI identifies and isolates human speech while suppressing non-stationary noises like sirens or background chatter.

- Processing Trends: The rise of On-device AI chips eliminates high latency, a critical pain point for Live Streaming and Remote Meetings.

Whether in Podcast Recording, remote meetings, or daily communication, clear and pure audio is the key to effective connection. However, the real world is filled with noise—the bustle of a café, street traffic, and the clatter of office keyboards—all acting as "invisible killers" of audio quality. For a long time, users of wireless microphones have sought effective solutions, but traditional technology often struggles to balance noise suppression with natural vocal fidelity in complex environments.

Today, Artificial Intelligence (AI) is changing what "noise reduction" means. The goal is no longer just to "erase" noise but to "understand" sound, separating what matters from what does not and delivering a far cleaner listening experience.

Why Traditional ENC Struggles in Real-World Environments

How it Works

When noise reduction comes up, many people think of Environmental Noise Cancellation (ENC) or Digital Signal Processing (DSP). These technologies have played a vital role for decades. Their working principle is straightforward: microphones capture ambient noise, and preset algorithms or filters identify and "subtract" specific frequency ranges from the signal.

In the audio world, Spectral Subtraction and Wiener Filtering are two common traditional techniques. They primarily target "stationary" noise—consistent sounds like the hum of a fan or air conditioner. By analyzing the spectral characteristics of these steady noises, the system attempts to deduct the corresponding noise spectrum from the overall audio signal.
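To make the "subtraction" idea concrete, here is a minimal sketch of magnitude spectral subtraction using NumPy. It assumes a separate noise-only clip is available for estimating the noise spectrum. The function name, the frame length, and the `floor` parameter are illustrative choices, not taken from any particular ENC product.

```python
import numpy as np

def spectral_subtraction(noisy, noise_clip, frame_len=512, floor=0.05):
    """Subtract an average noise magnitude spectrum from each frame of `noisy`."""
    hop = frame_len // 2
    # Periodic Hann window: at 50% overlap the shifted windows sum to 1.
    window = np.hanning(frame_len + 1)[:-1]

    def frames(x):
        count = 1 + (len(x) - frame_len) // hop
        return np.stack([x[i * hop:i * hop + frame_len] * window
                         for i in range(count)])

    # Noise profile: mean magnitude spectrum of the noise-only clip.
    noise_mag = np.abs(np.fft.rfft(frames(noise_clip), axis=1)).mean(axis=0)

    spec = np.fft.rfft(frames(noisy), axis=1)
    mag, phase = np.abs(spec), np.angle(spec)
    # Subtract the profile, keeping a small spectral floor so that
    # magnitudes never go negative.
    clean_mag = np.maximum(mag - noise_mag, floor * mag)
    clean = np.fft.irfft(clean_mag * np.exp(1j * phase), n=frame_len, axis=1)

    # Overlap-add the processed frames back into one signal.
    out = np.zeros(len(noisy))
    for i, frame in enumerate(clean):
        out[i * hop:i * hop + frame_len] += frame
    return out
```

Because the noise profile is a fixed average, this approach only works when the noise stays roughly the same over time, which is exactly the "stationary" case described above.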

Why Traditional Noise Reduction Can’t Separate Voice from Noise

While effective for steady noise, the "subtraction" philosophy has significant limits. From a technical perspective, these technologies cannot truly "understand" the source of a sound or distinguish between the target voice and interfering noise. They simply perform passive frequency filtering on the entire signal.

This "one-size-fits-all" approach fails in complex, real-world environments:

- Inability to handle non-stationary noise: Traditional methods struggle with sudden, fast-changing sounds like keyboard clicks, car horns, or multiple people talking in the background.

- The cost of vocal damage: Because they cannot precisely separate voice from noise, traditional filters often "injure" the harmonic structure of the human voice. This results in thin, muffled audio, often described as "robotic" or "underwater" sound—an unacceptable compromise for high-quality content creators.

Put simply, traditional noise reduction is like a "rough pruning"—it can cut away obvious weeds but struggles to clear debris without harming the main plant (the voice). It is this "subtraction dilemma" that has allowed AI noise cancellation to truly shine.

How AI Noise Cancellation Works: From Filtering to Voice Separation

If traditional reduction is "subtraction," AI noise cancellation is "separation." It no longer settles for simple filtering but uses deep learning to "understand" the signal, suppressing noise while preserving vocal integrity.

The Underlying Logic: Deep Learning and Source Separation

The core of AI noise reduction lies in a fundamental shift: moving from rule-based signal processing to intelligent learning based on Deep Neural Network (DNN) models. AI systems are trained on massive datasets of human voices and noises—covering various languages, accents, and environments—to master the complex patterns that distinguish them.

What the AI learns is what a human voice sounds like. It identifies the harmonic structures, pitch, and speech patterns that together act much like a "voice fingerprint." From a technical perspective, this makes AI noise reduction closer to Source Separation: "deconstructing" a mixed audio stream into independent voice and background noise layers.

Inside AI Noise Cancellation: How It Identifies and Removes Noise

AI noise reduction is a multi-stage process:

- Voice Pattern Recognition: The model analyzes the input signal in real time to identify complex patterns belonging to human speech, including fundamental frequencies and resonances.

- Real-Time Classification: Within milliseconds, the AI accurately classifies segments as either target voice or background noise.

- Selective Suppression: Once classified, the system applies Dynamic Suppression only to the background noise while leaving the target voice untouched. This is crucial for Live Streaming, ensuring the speaker remains the focus.
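The last two stages above can be sketched as a time-frequency mask. The sketch assumes a trained network (not shown) has already produced `speech_prob`, a per-bin probability that a spectrogram cell belongs to the target voice. The function names and the 40dB default are illustrative assumptions, not a description of any vendor's implementation.

```python
import numpy as np

def suppression_gains(speech_prob, max_suppression_db=40.0):
    """Map per-bin speech probabilities (0..1) to linear gains."""
    speech_prob = np.clip(speech_prob, 0.0, 1.0)
    # Attenuation shrinks to 0 dB as a bin looks more like speech.
    attenuation_db = max_suppression_db * (1.0 - speech_prob)
    return 10.0 ** (-attenuation_db / 20.0)

def apply_mask(spectrogram, speech_prob, max_suppression_db=40.0):
    """Scale each spectrogram bin by its gain; speech bins pass unchanged."""
    return spectrogram * suppression_gains(speech_prob, max_suppression_db)
```

A bin classified as pure speech keeps a gain of 1, while a bin classified as pure noise is scaled by 0.01, which corresponds to 40dB of attenuation.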

What “Deep Noise Reduction” Really Means for Audio Quality

Decibels (dB) are the key metric, and the scale is logarithmic. If both figures describe how far the noise level is pushed down, moving from a traditional 15dB to AI's 40dB adds 25dB of attenuation, which leaves roughly 1/18 of the residual noise amplitude rather than the 2.7x improvement the raw numbers might suggest. AI also significantly reduces artifacts like the "underwater" sound common in traditional algorithms, although slight artifacts may still occur at extreme suppression levels. Through intelligent compensation, AI keeps the voice clear and natural, balancing suppression depth against vocal fidelity.
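The decibel arithmetic behind these figures can be checked in a few lines. This sketch uses the standard conversions (20·log10 for amplitude, 10·log10 for power) and assumes the 15dB and 40dB values describe noise attenuation. The helper names are illustrative.

```python
def db_to_amplitude_ratio(db):
    """Amplitude ratio for a level difference in dB."""
    return 10 ** (db / 20)

def db_to_power_ratio(db):
    """Power ratio for a level difference in dB."""
    return 10 ** (db / 10)

for db in (15, 40, 40 - 15):
    print(f"{db} dB: amplitude x{db_to_amplitude_ratio(db):.1f}, "
          f"power x{db_to_power_ratio(db):.0f}")
```

On these assumptions, 15dB cuts noise amplitude by a factor of about 5.6, 40dB by a factor of 100, and the 25dB gap between them corresponds to a factor of about 17.8.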

The Evolution of AI "Hearing": Empowering Real-Time Creation

Conquering Non-stationary Noise

Real-world noise is often dynamic and unpredictable (Non-stationary Noise), such as wind during Video Content Creation, traffic horns, or clattering dishes. Traditional tech lacks the real-time insight to handle these patterns. AI algorithms, however, possess powerful real-time inference capabilities, continuously analyzing the audio stream to respond instantly to unpredictable transient noises, ensuring the voice stays prominent.

Focusing on the Core Message

There is a clear distinction between Voice Isolation and traditional ENC. While ENC lowers overall ambient volume, AI-driven Voice Isolation precisely extracts the target voice from a complex sound field. This is invaluable for Remote Meetings, Podcast Recording, and field interviews, allowing the speaker to stand out even if others are talking in the background.

The Rise of On-Device AI

The advancement of On-device AI chips has broken previous processing bottlenecks for wireless mics. Modern audio devices integrate dedicated AI processors to run complex algorithms locally. This means high-performance noise reduction can occur with ultra-low latency, meeting the demands of live broadcasts and real-time calls.
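One part of that latency is easy to estimate: any frame-based processor must wait for a full frame of audio (plus any lookahead) before it can start working. The sketch below computes only this buffering delay. The sample rate and frame size in the example are hypothetical values, not specifications of any device, and compute time and wireless transmission add further delay on top.

```python
def buffering_latency_ms(frame_samples, sample_rate_hz, lookahead_frames=0):
    """Time spent collecting audio before a frame can be processed."""
    return 1000.0 * frame_samples * (1 + lookahead_frames) / sample_rate_hz

# Hypothetical configuration: 480-sample frames at 48 kHz.
print(buffering_latency_ms(480, 48_000))                      # no lookahead
print(buffering_latency_ms(480, 48_000, lookahead_frames=1))  # one frame of lookahead
```

Shorter frames and less lookahead lower the delay but give the model less context per decision, which is one reason dedicated on-device hardware matters for real-time use.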

How AI Noise Cancellation Improves Recording and Workflow Efficiency

From "Fix it in Post" to "Pro on the Go"

For Video Content Creation, post-production audio cleaning is exhausting. From a technical standpoint, high-quality AI output drastically reduces the manual labor of fixing vocal distortion. This efficiency allows creators to focus on content, making "Live-to-Post" a reality and expanding creative boundaries.

Combining AI with Good Recording Habits

While powerful, AI is not "magic." Professionals know that no technology replaces a solid recording foundation. AI noise reduction is a tool to improve signal quality, but it cannot replace good wireless mic recording habits.

- Microphone Placement: Ensure the mic faces the source at an appropriate distance.

- Wind Protection: Use windscreens outdoors to reduce physical interference.

- Gain Control: Properly set gain to avoid clipping or excessive floor noise.
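For the gain point in particular, a quick check on a recorded clip can show whether levels were set sensibly. This is a minimal sketch assuming floating-point samples normalized to the range -1.0 to 1.0. The function names and the 0.999 threshold are illustrative choices.

```python
import numpy as np

def peak_dbfs(samples):
    """Peak level in dB relative to full scale (0 dBFS = |sample| of 1.0)."""
    peak = float(np.max(np.abs(samples)))
    return float("-inf") if peak == 0.0 else 20.0 * np.log10(peak)

def clipped_fraction(samples, threshold=0.999):
    """Share of samples at or above the clipping threshold."""
    return float(np.mean(np.abs(samples) >= threshold))
```

A peak sitting right at 0 dBFS together with a non-zero clipped fraction suggests the gain was too high. A very low peak suggests the gain was too low, so raising the level later will also raise the noise floor.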

As the industry saying goes: "AI improves signal, but doesn’t fix bad recording." AI is the "cherry on top," not a substitute for professional care.

Saramonic Air SE — Bringing Professional AI Noise Cancellation to Your Pocket

If AI Noise Cancellation is the "brain" of modern audio processing, the Saramonic Air SE is the ultimate manifestation of this technology in a miniaturized form.

Integrated AI Chip: A Breakthrough in Hardware-Level Processing

Unlike entry-level wireless microphones that rely on basic software filters, the Air SE is powered by a true On-device AI processor. Its noise-reduction algorithm has been trained on over 700,000 noise samples across 20,000+ hours of deep learning. This allows the wireless mic to act like a human brain, identifying and isolating "voice fingerprints" from complex backgrounds in real time and delivering an industry-leading 40dB noise reduction depth.

Dual-Mode Intelligent Noise Cancellation: Precision for Every Scenario

The Air SE offers a 2-level AI noise cancellation system, giving Vloggers and Live Streamers total control over their sonic environment:

- Light Mode: Perfect for indoor settings, neutralizing subtle hums from fans or AC units while maintaining a natural ambient feel.

- Strong Mode: Engineered for extreme outdoor conditions. Whether it's heavy traffic, howling wind, or construction clamor, this mode ensures your voice remains crystal clear even in the most chaotic acoustic environments.

5g Ultra-Lightweight Design: Power Without the Bulk

Despite its powerful AI capabilities, the Air SE transmitter weighs a mere 5g. For creators looking for a best-in-class vlog microphone, the Air SE offers the perfect balance of portability and professional-grade AI audio isolation. You won't feel it on your collar, but you will definitely hear the difference in your recordings.

Conclusion

The development of AI noise reduction marks a technological evolution from "noise removal" to "sound understanding." It empowers every user to communicate with confidence and clarity anywhere in the world. As processing power continues to grow, AI noise cancellation will shift from a premium feature to a standard for all modern wireless microphones.

To learn more about the latest news from Saramonic, follow our official social media channels: Facebook, YouTube, Instagram, X, and Facebook Group.

Frequently Asked Questions

What is AI noise reduction?

AI noise reduction is a technology based on deep learning models (like neural networks) that identifies and separates human voices from ambient noise by training on massive datasets.

Is AI noise cancellation better than ENC?

Yes. Technically, ENC targets steady background hums with ~15dB reduction, whereas AI handles complex, non-stationary noises with up to 40dB suppression and better vocal protection.

Does AI noise reduction affect voice quality?

High-quality AI significantly improves clarity. However, at extreme suppression levels, slight artifacts may occur, which is why professional recording environments are still recommended.

Can AI remove background voices?

Yes. Advanced Voice Isolation can recognize a specific speaker’s "fingerprint" and suppress other voices in the background, which is highly effective for Remote Meetings.